Let's return to the crop yield example used earlier in the article and plot crop yield against the amount of rainfall. Look at this graphic: we have plotted two points, $(x_1, y_1)$ and $(x_2, y_2)$. More generally, say you have a bunch of data points $(x, y)$.

Regression equation: the simplest linear regression equation, with one dependent variable and one independent variable, is $y = mx + c$. The regression line is the best-fit line through the points in the data set, and our goal for this section is to write the equation of that best-fit line. This question can also be answered from a linear algebra perspective.

Next, assign a variable to each of the numbers we will need for the calculation. We must get the sum of y, the sum of x, the sum of x squared, and the sum of x times y. I called these sumyi, sumxi, sumxi2, and sumxiyi. If there are no headers, then fr will begin at row 1 and N will require no adjustment.

We shouldn't overlook the question of interpretation, because a model that has a sound interpretation can quickly turn into one that makes little or no sense. So, to answer the question "What is the difference between linear regression on y with x and x with y?", we can say that the interpretation of the regression equation changes when we regress x on y instead of y on x.
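The four sums described above are enough to compute the slope and intercept of $y = mx + c$. The following is a minimal sketch in Python, assuming the standard closed-form least-squares formulas. The function name `fit_line` and the rainfall and yield numbers are made up for illustration; only the names sumyi, sumxi, sumxi2, and sumxiyi come from the text.

```python
def fit_line(xs, ys):
    """Return (m, c) for the least-squares line y = m*x + c.

    Hypothetical helper for illustration; uses the four sums named in the text.
    """
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")

    sumxi = sum(xs)                                # sum of x
    sumyi = sum(ys)                                # sum of y
    sumxi2 = sum(x * x for x in xs)                # sum of x squared
    sumxiyi = sum(x * y for x, y in zip(xs, ys))   # sum of x times y

    denom = n * sumxi2 - sumxi ** 2
    if denom == 0:
        raise ValueError("all x values are identical; slope is undefined")

    m = (n * sumxiyi - sumxi * sumyi) / denom      # slope
    c = (sumyi - m * sumxi) / n                    # intercept
    return m, c


# Invented sample data (not from the article): yield rises by 2 per unit of rain.
rainfall = [1.0, 2.0, 3.0, 4.0]
crop_yield = [3.0, 5.0, 7.0, 9.0]

m, c = fit_line(rainfall, crop_yield)
print(f"y = {m}x + {c}")
```

Because the sample points lie exactly on a line, the fitted slope and intercept recover that line. With real, noisy data the same formulas give the line that minimizes the sum of squared vertical distances.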


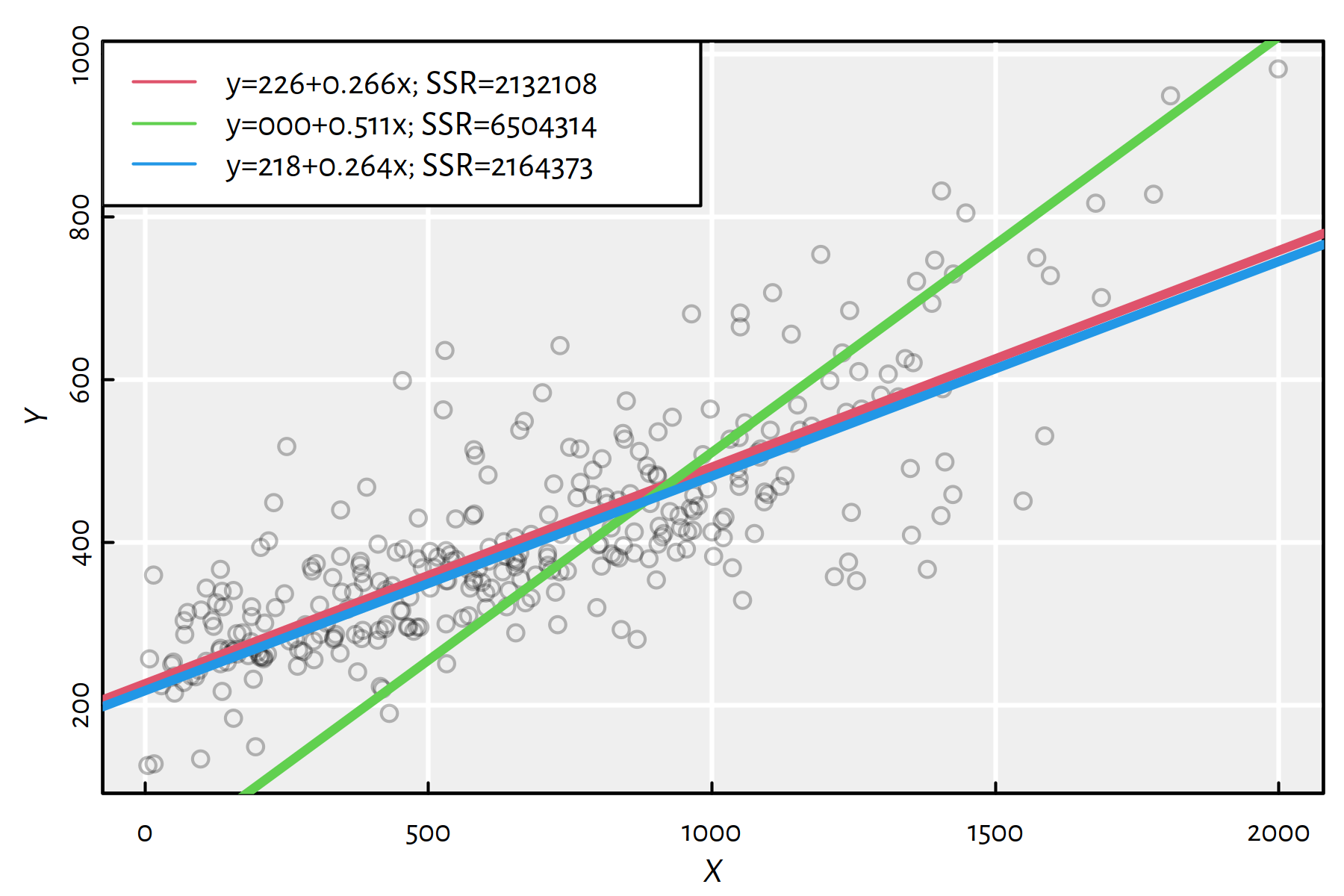

Know how to fit an estimated regression equation to a set of sample data using the least-squares method. Understand the differences between the regression model, the regression equation, and the estimated regression equation.

Suppose we regress wages on education. Implicit in the formulation of that econometric equation is a claim about the direction of causality; switch the two variables and the implied direction switches as well. I'm sure you can think of more examples like this one (outside the realm of economics too), but as you can see, the interpretation of the model can change quite significantly when we switch from regressing y on x to regressing x on y.

To say what makes one line better than another, we must stipulate a loss function. A loss function gives us a way to say how 'bad' something is; when we minimize it, we make our line as 'good' as possible, or find the 'best' line. Traditionally, when we conduct a regression analysis, we find estimates of the slope and intercept that minimize the sum of squared errors.
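To make the idea of a loss function concrete, here is a small Python sketch of the sum of squared errors, used to compare two candidate lines. The function name `sum_squared_errors`, the data, and the two candidate lines are assumptions chosen for illustration, not values from the article.

```python
def sum_squared_errors(xs, ys, slope, intercept):
    """Sum of squared vertical distances between the points and the line."""
    return sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))


# Invented data that roughly follows y = 2x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.1, 5.9, 8.2]

# Two hypothetical candidate lines: the lower loss marks the better fit.
loss_a = sum_squared_errors(xs, ys, slope=2.0, intercept=0.0)
loss_b = sum_squared_errors(xs, ys, slope=1.5, intercept=1.0)

print("line a is better" if loss_a < loss_b else "line b is better")
```

Least-squares regression amounts to searching over every possible slope and intercept for the pair that makes this quantity as small as possible.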

The best way to think about this is to imagine a scatterplot of points with $y$ on the vertical axis and $x$ on the horizontal axis. Given this framework, you see a cloud of points, which may be vaguely circular or may be elongated into an ellipse. What you are trying to do in regression is find what might be called the 'line of best fit'. However, while this seems straightforward, we need to figure out what we mean by 'best', and that means we must define what it would be for a line to be good, or for one line to be better than another. For simple linear regression, the least squares estimates of the model parameters $\beta_0$ and $\beta_1$ are denoted $b_0$ and $b_1$.
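For reference, the standard closed-form expressions for these least squares estimates are given below. The notation $\bar{x}$ and $\bar{y}$ for the sample means and $n$ for the number of points is assumed here, since the text does not define it.

$$
b_1 = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2},
\qquad
b_0 = \bar{y} - b_1 \bar{x}
$$

Here $b_1$ plays the role of the slope $m$ and $b_0$ the role of the intercept $c$ in $y = mx + c$. The second formula also shows that the fitted line always passes through the point $(\bar{x}, \bar{y})$.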